If you’ve tried to build anything serious with AI in the last couple of years, you’ve probably hit the same wall everyone else has: “we have the data… but we’re not allowed to use it the way we want.” Legal and security teams don’t love the idea of production data being copied into dev environments, shared with vendors, or fed into every new model experiment. And honestly, they’re right – that’s how leaks happen.

This is exactly the problem synthetic data generation has matured to solve. Instead of working with the real thing, you generate a stand-in dataset that behaves like the real thing – with the same patterns, correlations, and edge cases – but without exposing actual people or transactions. Think of it as a “stunt double” for your data.

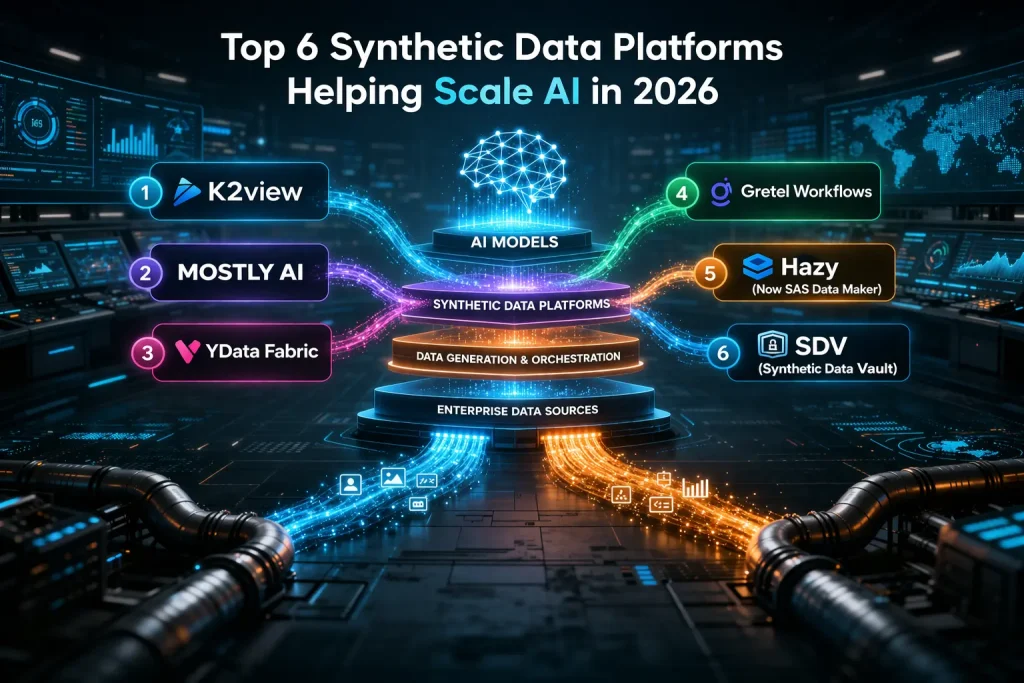

In 2026, synthetic data has moved from an interesting experiment to a practical requirement. Enterprise platforms now sit alongside open-source frameworks, each addressing different needs. Below is a practical, opinionated look at six synthetic data platforms actively used to scale AI today.

K2view

K2view is designed for large, complex enterprises – environments where data is fragmented across multiple systems, including legacy platforms that are difficult to access, yet still critical for testing and AI.

Rather than acting as a standalone generator, K2view manages the full synthetic data lifecycle – from source data extraction and subsetting to pipelining and synthetic data generation. Its architecture preserves referential integrity across systems, ensuring that synthetic entities remain consistent across applications.
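
To make "referential integrity across systems" concrete, here is a minimal Python sketch. It is not K2view's implementation. It assumes two hypothetical tables (`crm_customers` and `billing_invoices`) that share a `customer_id`, and it swaps each real ID for a deterministic surrogate built from a keyed hash, so the same customer gets the same synthetic ID in both systems. The key, table names, and `CUST-` prefix are all made up for illustration.

```python
import hashlib
import hmac

# Hypothetical secret; in practice this would come from a secrets manager.
SECRET_KEY = b"demo-only-key"

def surrogate_id(real_id: str) -> str:
    """Map a real ID to a stable synthetic ID using a keyed hash (HMAC-SHA256)."""
    digest = hmac.new(SECRET_KEY, real_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "CUST-" + digest[:12]

# Two hypothetical source systems that reference the same customers.
crm_customers = [
    {"customer_id": "1001", "segment": "retail"},
    {"customer_id": "1002", "segment": "business"},
]
billing_invoices = [
    {"invoice_id": "A1", "customer_id": "1001", "amount": 120.0},
    {"invoice_id": "A2", "customer_id": "1002", "amount": 75.5},
    {"invoice_id": "A3", "customer_id": "1001", "amount": 42.0},
]

def replace_ids(rows):
    """Return copies of the rows with customer_id swapped for its surrogate."""
    return [{**row, "customer_id": surrogate_id(row["customer_id"])} for row in rows]

synthetic_customers = replace_ids(crm_customers)
synthetic_invoices = replace_ids(billing_invoices)

# Every invoice should still point at a customer in the synthetic CRM table.
known_ids = {row["customer_id"] for row in synthetic_customers}
orphans = [row for row in synthetic_invoices if row["customer_id"] not in known_ids]
print("orphaned invoices:", len(orphans))
```

Because the mapping is deterministic, each table can be processed independently and the joins still line up afterwards. That is the property that matters when data is spread across many systems.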

K2view positions itself as a full-stack synthetic data platform, combining masking, anonymization, and synthetic data generation with integrated delivery into Dev/Test and AI pipelines.

A few things it does well:

- It combines GenAI-driven and rules-based generation methods.

- It includes extensive built-in masking and anonymization capabilities.

- It integrates directly into CI/CD and test data workflows rather than operating in isolation.

The trade-off is that it requires upfront planning and is not plug-and-play. It is best suited to enterprise-scale environments, and support coverage is strongest in Europe and the Americas. Once implemented, users consistently highlight fast, reliable delivery of usable synthetic data.

MOSTLY AI

MOSTLY AI targets teams that want high-quality synthetic data without deep technical overhead. Its interface is designed for accessibility, enabling analytics teams to generate realistic datasets without heavy engineering involvement.

It learns patterns from source data and generates privacy-safe synthetic versions, supported by fidelity metrics that measure similarity between real and synthetic datasets.
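
As a rough illustration of what a fidelity metric measures, the sketch below compares one categorical column in a real and a synthetic dataset using total variation distance. This is a generic textbook measure chosen for simplicity, not MOSTLY AI's actual metric. The `plan` column and its values are hypothetical.

```python
from collections import Counter

def category_shares(values):
    """Return each category's share of the column as a dict."""
    counts = Counter(values)
    total = len(values)
    return {category: count / total for category, count in counts.items()}

def total_variation_distance(real_values, synthetic_values):
    """0.0 means identical category distributions, 1.0 means no overlap."""
    real = category_shares(real_values)
    synthetic = category_shares(synthetic_values)
    categories = set(real) | set(synthetic)
    return 0.5 * sum(abs(real.get(c, 0.0) - synthetic.get(c, 0.0)) for c in categories)

# Hypothetical "plan" column from a real table and its synthetic counterpart.
real_plan = ["basic"] * 50 + ["plus"] * 30 + ["pro"] * 20
synthetic_plan = ["basic"] * 45 + ["plus"] * 35 + ["pro"] * 20

distance = total_variation_distance(real_plan, synthetic_plan)
fidelity_score = 1.0 - distance  # higher is closer to the real distribution
print(f"TVD = {distance:.2f}, fidelity = {fidelity_score:.2f}")
```

A single-column score like this says nothing about correlations between columns, so a fuller fidelity check would typically also compare pairwise relationships.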

Where it tends to shine:

- Usable by analytics teams without constant engineering support.

- Fast time to value for generating usable datasets.

- Support for multi-relational datasets.

The limitation is reduced flexibility for highly complex or hierarchical data. Advanced users may find fewer controls than on more technical platforms. For mid-size to large organizations, it offers a strong balance between usability and capability.

YData Fabric

YData Fabric reflects a data-centric AI approach, combining data profiling, quality assessment, and synthetic generation in a unified platform focused on improving ML outcomes.

It supports tabular, relational, and time-series data, making it suitable for diverse use cases such as fraud detection, customer analytics, and IoT data modeling.

Why organizations choose it:

- Identifies and resolves data quality issues before generation.

- Generates balanced datasets for improved model performance.

- Offers both no-code interfaces and SDK access.

However, it assumes a level of data science expertise. It is not designed for casual users, and while it supports privacy protection, it may not meet every regulatory requirement out of the box.

Gretel Workflows

Gretel is built with developers in mind, focusing on embedding synthetic data generation directly into pipelines rather than exposing it as a standalone interface.

It enables scheduled and automated data generation across CI/CD workflows, supporting both structured and unstructured data with API-first integration.

Where it fits best:

- Teams with mature DevOps or MLOps practices.

- Environments requiring automated, repeatable data generation.

- Use cases where synthetic data must be continuously refreshed.

Its strengths lie in automation and integration, but it is cloud-dependent and best suited to technical teams. For less technical users, it may feel overly complex.

Hazy (Now SAS Data Maker)

Hazy, now part of SAS Data Maker, focuses on privacy-first synthetic data generation, particularly for regulated industries such as financial services and insurance.

It emphasizes differential privacy, anonymization, and governance, enabling organizations to safely share and use data under strict regulatory constraints.
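
For readers unfamiliar with differential privacy, the sketch below shows the textbook Laplace mechanism applied to a simple count. It is a generic illustration, not a description of how Hazy or SAS Data Maker applies differential privacy internally. The `claims` data, the threshold, and the `epsilon` value are hypothetical.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count. A counting query has sensitivity 1, so scale = 1 / epsilon."""
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical claims data; the values are made up for illustration.
claims = [{"amount": a} for a in (120, 950, 40, 3100, 770, 15, 2200)]
rng = random.Random(7)  # fixed seed so the sketch is repeatable

noisy = private_count(claims, lambda c: c["amount"] > 500, epsilon=1.0, rng=rng)
print(f"noisy count of large claims: {noisy:.2f}")
```

A smaller `epsilon` adds more noise and gives stronger privacy, and a larger one gives more accurate answers. Choosing that trade-off is a governance decision as much as a technical one.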

Why regulated organizations adopt it:

- Strong compliance-focused design.

- Flexible deployment options, including on-premises.

- Maintains consistency across datasets while protecting sensitive data.

The trade-off is complexity. Implementation can be time-consuming, and the platform is better suited to large enterprises than agile or smaller teams.

SDV (Synthetic Data Vault)

SDV represents the open-source approach to synthetic data. It is a Python-based framework supporting multiple generative models, including CTGAN, CopulaGAN, and GaussianCopula.

It integrates naturally into data science workflows, offering flexibility without licensing costs.

Where it works well:

- Data science teams and startups.

- Research and experimentation environments.

- Organizations building custom synthetic data pipelines.

However, it requires manual setup and lacks enterprise-grade governance, support, and automation. Users must manage deployment, monitoring, and compliance independently.

What This Means for Scaling AI in 2026

Looking across these platforms, several trends are clear:

- Synthetic data is now operational – integrated into testing, development, and AI training workflows.

- Compliance is a primary driver – especially in enterprise platforms such as K2view and Hazy.

- Automation is becoming standard – with pipeline-driven delivery replacing manual processes.

- Data quality and balance are increasingly important – not just privacy protection.

- Different organizations require different approaches – from UI-driven tools to code-first frameworks.

For organizations evaluating where to start:

- Large enterprises with complex ecosystems tend to favor platforms like K2view.

- Mixed technical teams often benefit from MOSTLY AI.

- Data quality-driven AI initiatives align well with YData Fabric.

- DevOps-centric teams may prefer Gretel.

- Regulated industries typically look at Hazy.

- Technical teams often experiment with SDV.

The broader pattern: organizations that adopt synthetic data early tend to move faster because they remove friction around data access and compliance. Synthetic data becomes a safe, shareable layer that lets teams experiment, test, and deploy without waiting on approvals for production data.

Synthetic data is no longer just a privacy workaround – it is becoming a foundational capability for scaling AI without increasing risk.